Monitoring strategies for continuous evaluation of deployed clinical prediction models

IF 4.5

2区 医学

Q2 COMPUTER SCIENCE, INTERDISCIPLINARY APPLICATIONS

引用次数: 0

Abstract

Objective:

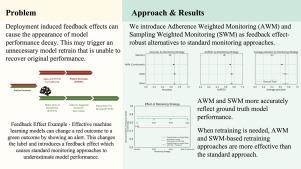

As machine learning adoption in clinical practice continues to grow, deployed classifiers must be continuously monitored and updated (retrained) to protect against data drift that stems from inevitable changes, including evolving medical practices and shifting patient populations. However, successful clinical machine learning classifiers will lead to a change in care which may change the distribution of features, labels, and their relationship. For example, “high risk” cases that were correctly identified by the model may ultimately get labeled as “low risk” thanks to an intervention prompted by the model’s alert. Classifier surveillance systems naive to such deployment-induced feedback loops will estimate lower model performance and lead to degraded future classifier retrains. The objective of this study is to simulate the impact of these feedback loops, propose feedback aware monitoring strategies as a solution, and assess the performance of these alternative monitoring strategies through simulations.

Methods:

We propose Adherence Weighted and Sampling Weighted Monitoring as two feedback loop-aware surveillance strategies. Through simulation we evaluate their ability to accurately appraise post deployment model performance and to initiate safe and accurate classifier retraining.

Results:

Measured across accuracy, area under the receiver operating characteristic curve, average precision, brier score, expected calibration error, F1, precision, sensitivity, and specificity, in the presence of feedback loops, Adherence Weighted and Sampling Weighted strategies have the highest fidelity to the ground truth classifier performance while standard approaches yield the most inaccurate estimations. Furthermore, in simulations with true data drift, retraining using standard unweighted approaches results in a AUROC score of 0.52 (drop from 0.72). In contrast, retraining based on Adherence Weighted and Sampling Weighted strategies recover performance to 0.67 which is comparable to what a new model trained from scratch on the existing and shifted data would obtain.

Conclusion:

Compared to standard approaches, Adherence Weighted and Sampling Weighted strategies yield more accurate classifier performance estimates, measured according to the no-treatment potential outcome. Retraining based on these strategies bring stronger performance recovery when tested against data drift and feedback loops than do standard approaches.

对部署的临床预测模型进行持续评估的监测策略。

随着机器学习在临床实践中的应用不断增长,必须不断监测和更新(再培训)已部署的分类器,以防止因不可避免的变化(包括不断发展的医疗实践和不断变化的患者群体)而导致的数据漂移。然而,成功的临床机器学习分类器将导致护理的变化,这可能会改变特征、标签及其关系的分布。例如,被模型正确识别的“高风险”案例可能最终被标记为“低风险”,这要归功于模型警报提示的干预。对这种部署诱导的反馈回路幼稚的分类器监视系统将估计较低的模型性能,并导致未来分类器再训练的退化。本研究的目的是模拟这些反馈回路的影响,提出反馈感知监控策略作为解决方案,并通过模拟评估这些替代监控策略的性能。方法:提出了依从性加权监测和抽样加权监测两种反馈环感知监测策略。通过仿真,我们评估了他们准确评估部署后模型性能和启动安全准确的分类器再训练的能力。结果:测量精度,接收器工作特征曲线下面积,平均精度,brier评分,预期校准误差,F1,精度,灵敏度和特异性,在存在反馈环路的情况下,粘附加权和抽样加权策略对地面真实分类器性能具有最高的保真度,而标准方法产生最不准确的估计。此外,在真实数据漂移的模拟中,使用标准未加权方法进行再训练的AUROC得分为0.52(从0.72下降)。相比之下,基于依从性加权和抽样加权策略的再训练将性能恢复到0.67,这与在现有和转移的数据上从头开始训练的新模型所获得的性能相当。结论:与标准方法相比,依从性加权和抽样加权策略产生更准确的分类器性能估计,根据无治疗的潜在结果来衡量。在针对数据漂移和反馈循环进行测试时,基于这些策略的再训练比标准方法带来更强的性能恢复。

本文章由计算机程序翻译,如有差异,请以英文原文为准。

求助全文

约1分钟内获得全文

求助全文

来源期刊

Journal of Biomedical Informatics

医学-计算机:跨学科应用

CiteScore

8.90

自引率

6.70%

发文量

243

审稿时长

32 days

期刊介绍:

The Journal of Biomedical Informatics reflects a commitment to high-quality original research papers, reviews, and commentaries in the area of biomedical informatics methodology. Although we publish articles motivated by applications in the biomedical sciences (for example, clinical medicine, health care, population health, and translational bioinformatics), the journal emphasizes reports of new methodologies and techniques that have general applicability and that form the basis for the evolving science of biomedical informatics. Articles on medical devices; evaluations of implemented systems (including clinical trials of information technologies); or papers that provide insight into a biological process, a specific disease, or treatment options would generally be more suitable for publication in other venues. Papers on applications of signal processing and image analysis are often more suitable for biomedical engineering journals or other informatics journals, although we do publish papers that emphasize the information management and knowledge representation/modeling issues that arise in the storage and use of biological signals and images. System descriptions are welcome if they illustrate and substantiate the underlying methodology that is the principal focus of the report and an effort is made to address the generalizability and/or range of application of that methodology. Note also that, given the international nature of JBI, papers that deal with specific languages other than English, or with country-specific health systems or approaches, are acceptable for JBI only if they offer generalizable lessons that are relevant to the broad JBI readership, regardless of their country, language, culture, or health system.

求助内容:

求助内容: 应助结果提醒方式:

应助结果提醒方式: