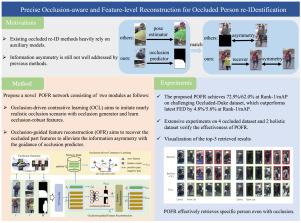

Precise occlusion-aware and feature-level reconstruction for occluded person re-identification

IF 5.5

Zone 2, Computer Science

Q1 COMPUTER SCIENCE, ARTIFICIAL INTELLIGENCE

Citations: 0

Abstract

Occluded person re-IDentification (re-ID) is a challenging task in surveillance scenarios that remains unresolved. To address it, existing methods primarily rely on auxiliary models, e.g., pose estimation, to locate visible parts by detecting human keypoints. However, these approaches inevitably encounter two issues: domain gap and information asymmetry. The former arises because the auxiliary models are pre-trained on different domains, while the latter means that an occluded query offers fewer valid cues than the holistic, fully visible gallery. In this paper, we propose a novel Precise Occlusion-aware and Feature-level Reconstruction (POFR) network for occluded re-ID. POFR addresses the occlusion issue from two viewpoints: perceiving occlusions as distinct from visible human bodies, and reconstructing the occluded parts at the feature level. The first perspective is achieved through occlusion-driven contrastive learning (OCL). OCL incorporates an occlusion generator capable of generating object-specific and person-specific occlusions. Unlike previous coarse occlusions, our generator leverages segmented pedestrians and obstacles to generate realistic occlusions, which are then used for contrastive learning. The second perspective is implemented through an occlusion-guided feature reconstruction (OFR) module. OFR first learns an occlusion predictor to estimate the occlusion mask, which is subsequently used to recover the features corresponding to the occluded regions. Benefiting from the occlusion generator, the occlusion predictor can be effectively supervised with precise occlusion masks, thereby mitigating the domain gap problem. Additionally, the recovered features alleviate information asymmetry when matching an occluded query against a holistic gallery. Extensive experiments conducted on occluded, partial, and holistic datasets demonstrate the superior performance of our POFR over state-of-the-art methods.
The source code will be made publicly available upon paper acceptance.
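
The abstract notes that pasting segmented occluders onto training images yields precise occlusion masks for supervising the occlusion predictor. The following is a minimal sketch of that general idea only, not the authors' implementation (their code had not been released at the time of the abstract). The function name `paste_occluder`, the nested-list grayscale image representation, and the paste position parameters are all hypothetical choices made for illustration; a real pipeline would typically operate on color image tensors.

```python
from typing import List, Tuple

Image = List[List[float]]  # H x W grayscale image (toy representation, an assumption)
Mask = List[List[int]]     # H x W binary mask, 1 = pixel belongs to the occluder


def paste_occluder(
    image: Image,
    occluder: Image,
    occluder_mask: Mask,
    top: int,
    left: int,
) -> Tuple[Image, Mask]:
    """Paste a segmented occluder onto `image` at (top, left).

    Returns the occluded image together with a full-size occlusion mask
    that marks exactly the pixels overwritten by the occluder.
    """
    height, width = len(image), len(image[0])
    occluded = [row[:] for row in image]  # copy so the input stays intact
    occlusion_mask = [[0] * width for _ in range(height)]

    for i, mask_row in enumerate(occluder_mask):
        for j, is_occluder in enumerate(mask_row):
            y, x = top + i, left + j
            # Skip background pixels of the segment and anything out of bounds.
            if is_occluder and 0 <= y < height and 0 <= x < width:
                occluded[y][x] = occluder[i][j]
                occlusion_mask[y][x] = 1
    return occluded, occlusion_mask


# Toy example: a blank 4x4 image and an L-shaped occluder segment.
image = [[0.0] * 4 for _ in range(4)]
occluder = [[9.0, 9.0], [9.0, 9.0]]
occluder_mask = [[1, 0], [1, 1]]
occluded, occlusion_mask = paste_occluder(image, occluder, occluder_mask, top=1, left=2)
```

Because the occluder is placed synthetically, the returned mask follows the segment's outline pixel by pixel rather than a coarse bounding box, which is the property the abstract relies on when it describes supervising the predictor with precise masks.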



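Source journal

Neurocomputing

Engineering & Technology - Computer Science: Artificial Intelligence

CiteScore

13.10

Self-citation rate

10.00%

Articles published

1382

Review time

70 days

Journal description: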

Neurocomputing publishes articles describing recent fundamental contributions in the field of neurocomputing. Neurocomputing theory, practice and applications are the essential topics being covered.
