Word-Sequence Entropy: Towards uncertainty estimation in free-form medical question answering applications and beyond

Impact Factor: 7.5

Zone 2: Computer Science

Q1 AUTOMATION & CONTROL SYSTEMS

Engineering Applications of Artificial Intelligence

Pub Date : 2024-11-15

DOI:10.1016/j.engappai.2024.109553

Citations: 0

Abstract

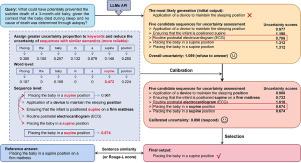

Uncertainty estimation is crucial for the reliability of safety-critical human and artificial intelligence (AI) interaction systems, particularly in the domain of healthcare engineering. However, a robust and general uncertainty measure for free-form answers has not been well-established in open-ended medical question-answering (QA) tasks, where generative inequality introduces a large number of irrelevant words and sequences within the generated set for uncertainty quantification (UQ), which can lead to biases. This paper proposes Word-Sequence Entropy (WSE), which calibrates uncertainty at both the word and sequence levels based on semantic relevance, highlighting keywords and enlarging the generative probability of trustworthy responses when performing UQ. We compare WSE with six baseline methods on five free-form medical QA datasets, utilizing seven popular large language models (LLMs), and demonstrate that WSE exhibits superior performance in accurate UQ under two standard criteria for correctness evaluation. Additionally, in terms of the potential for real-world medical QA applications, we achieve a significant enhancement (e.g., a 6.36% improvement in model accuracy on the COVID-QA dataset) in the performance of LLMs when employing responses with lower uncertainty that are identified by WSE as final answers, without requiring additional task-specific fine-tuning or architectural modifications.
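The abstract describes the idea only at a high level, so the following is a minimal illustrative sketch and not the paper's formulation. It assumes each sampled response comes with per-token log-probabilities, per-token relevance weights, and one sequence-level relevance weight; how those weights are obtained is left open here. All function names and dictionary keys (`weighted_nll`, `relevance_weighted_uncertainty`, `pick_least_uncertain`, `token_logprobs`, `token_relevance`, `seq_relevance`) are hypothetical.

```python
import math


def weighted_nll(token_logprobs, token_relevance):
    """Relevance-weighted negative log-likelihood of one response.

    Tokens with higher relevance contribute more to the score;
    tokens with zero relevance are ignored. (Illustrative assumption.)
    """
    total = sum(token_relevance)
    if total == 0:
        raise ValueError("token relevance must not sum to zero")
    weighted = sum(-lp * r for lp, r in zip(token_logprobs, token_relevance))
    return weighted / total


def relevance_weighted_uncertainty(responses):
    """Combine per-response scores, weighting each response by its
    sequence-level relevance. (Illustrative assumption.)"""
    seq_total = sum(r["seq_relevance"] for r in responses)
    if seq_total == 0:
        raise ValueError("sequence relevance must not sum to zero")
    return sum(
        r["seq_relevance"]
        * weighted_nll(r["token_logprobs"], r["token_relevance"])
        for r in responses
    ) / seq_total


def pick_least_uncertain(responses):
    """Return the sampled response with the lowest word-level score."""
    return min(
        responses,
        key=lambda r: weighted_nll(r["token_logprobs"], r["token_relevance"]),
    )


# Two hypothetical sampled answers to the same question.
samples = [
    {
        "text": "answer A",
        "token_logprobs": [math.log(0.5), math.log(0.5)],
        "token_relevance": [1.0, 1.0],
        "seq_relevance": 1.0,
    },
    {
        "text": "answer B",
        "token_logprobs": [math.log(0.9), math.log(0.1)],
        "token_relevance": [1.0, 0.0],  # second token treated as irrelevant
        "seq_relevance": 1.0,
    },
]

best = pick_least_uncertain(samples)
overall = relevance_weighted_uncertainty(samples)
```

In this toy input, the low-probability token in answer B carries zero relevance, so it does not inflate that answer's score. This mirrors the abstract's point that irrelevant words can bias an unweighted uncertainty estimate.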

Source journal

Engineering Applications of Artificial Intelligence

Engineering & Technology - Engineering: Electrical & Electronic

CiteScore

9.60

Self-citation rate

10.00%

Articles published

505

Review time

68 days

About the journal:

Artificial Intelligence (AI) is pivotal in driving the fourth industrial revolution, witnessing remarkable advancements across various machine learning methodologies. AI techniques have become indispensable tools for practicing engineers, enabling them to tackle previously insurmountable challenges. Engineering Applications of Artificial Intelligence serves as a global platform for the swift dissemination of research elucidating the practical application of AI methods across all engineering disciplines. Submitted papers are expected to present novel aspects of AI utilized in real-world engineering applications, validated using publicly available datasets to ensure the replicability of research outcomes. Join us in exploring the transformative potential of AI in engineering.
