Cross-modal recipe retrieval based on unified text encoder with fine-grained contrastive learning

IF 7.2

1区 计算机科学

Q1 COMPUTER SCIENCE, ARTIFICIAL INTELLIGENCE

引用次数: 0

Abstract

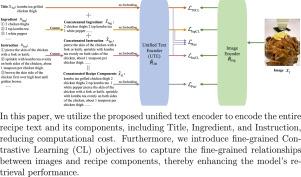

Cross-modal recipe retrieval is vital for transforming visual food cues into actionable cooking guidance, making culinary creativity more accessible. Existing methods separately encode the recipe Title, Ingredient, and Instruction using different text encoders, then aggregate them to obtain recipe feature, and finally match it with encoded image feature in a joint embedding space. These methods perform well but require significant computational cost. In addition, they only consider matching the entire recipe and the image but ignore the fine-grained correspondence between recipe components and the image, resulting in insufficient cross-modal interaction. To this end, we propose Unified Text Encoder with Fine-grained Contrastive Learning (UTE-FCL) to achieve a simple but efficient model. Specifically, in each recipe, UTE-FCL first concatenates each of the Ingredient and Instruction texts composed of multiple sentences as a single text. Then, it connects these two concatenated texts with the original single-phrase Title to obtain the concatenated recipe. Finally, it encodes these three concatenated texts and the original Title by a Transformer-based Unified Text Encoder (UTE). This proposed structure greatly reduces the memory usage and improves the feature encoding efficiency. Further, we propose fine-grained contrastive learning objectives to capture the correspondence between recipe components and the image at Title, Ingredient, and Instruction levels by measuring the mutual information. Extensive experiments demonstrate the effectiveness of UTE-FCL compared to existing methods.

基于统一文本编码器和细粒度对比学习的跨模态食谱检索

跨模态食谱检索对于将视觉食物线索转化为可操作的烹饪指导至关重要,它使烹饪创意更易实现。现有的方法使用不同的文本编码器分别对菜谱标题、配料和说明进行编码,然后将其汇总以获得菜谱特征,最后在联合嵌入空间中将其与编码的图像特征进行匹配。这些方法性能良好,但需要大量计算成本。此外,它们只考虑了整个菜谱与图像的匹配,却忽略了菜谱组成部分与图像之间的细粒度对应关系,导致跨模态交互不足。为此,我们提出了具有细粒度对比学习功能的统一文本编码器(UTE-FCL),以实现简单而高效的模型。具体来说,在每个食谱中,UTE-FCL 首先将由多个句子组成的 "成分 "文本和 "说明 "文本串联为一个文本。然后,它将这两个串联文本与原始的单句标题连接起来,得到串联食谱。最后,它通过基于变换器的统一文本编码器(UTE)对这三个串联文本和原始标题进行编码。这种结构大大减少了内存使用量,提高了特征编码效率。此外,我们还提出了细粒度对比学习目标,通过测量互信息来捕捉食谱成分与图像在标题、成分和说明层面的对应关系。大量实验证明,与现有方法相比,UTE-FCL 非常有效。

本文章由计算机程序翻译,如有差异,请以英文原文为准。

求助全文

约1分钟内获得全文

求助全文

来源期刊

Knowledge-Based Systems

工程技术-计算机:人工智能

CiteScore

14.80

自引率

12.50%

发文量

1245

审稿时长

7.8 months

期刊介绍:

Knowledge-Based Systems, an international and interdisciplinary journal in artificial intelligence, publishes original, innovative, and creative research results in the field. It focuses on knowledge-based and other artificial intelligence techniques-based systems. The journal aims to support human prediction and decision-making through data science and computation techniques, provide a balanced coverage of theory and practical study, and encourage the development and implementation of knowledge-based intelligence models, methods, systems, and software tools. Applications in business, government, education, engineering, and healthcare are emphasized.

求助内容:

求助内容: 应助结果提醒方式:

应助结果提醒方式: