Real-time sign language detection: Empowering the disabled community

Abstract

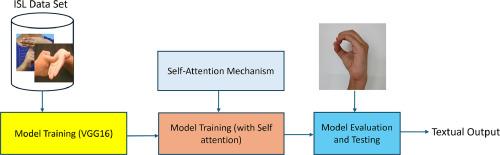

People with speech and hearing disabilities often face difficulties communicating with others. Sign language helps reduce this communication gap between people with and without such disabilities. Prior solutions based on machine learning and deep learning techniques, such as Convolutional Neural Networks, Support Vector Machines, and K-Nearest Neighbors, have either demonstrated low accuracy or have not been implemented as real-time working systems. This system addresses both issues. This work addresses the difficulties faced in classifying the characters of Indian Sign Language (ISL). It can identify a total of 23 ISL hand poses. The system uses a pre-trained VGG16 Convolutional Neural Network (CNN) with an attention mechanism. The model is trained with the Adam optimizer and a cross-entropy loss function. The results demonstrate the effectiveness of transfer learning for ISL classification, achieving an accuracy of 97.5% with VGG16 and 99.8% with VGG16 plus the attention mechanism.

- Enables quick and accurate sign language recognition using a pre-trained VGG16 model with an attention mechanism.

- The system does not require gloves or other external sensors, which simplifies the process and reduces costs.

- Real-time processing makes the system more helpful for people with speech and hearing disabilities, making it easier for them to communicate with others.
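
The classification stage summarized in the abstract (convolutional features, an attention mechanism, and a cross-entropy loss over 23 classes) can be illustrated with a minimal sketch. The abstract does not specify the form of the attention mechanism, so the dot-product spatial attention below is an assumption, as are the names `attention_pool`, `classify`, `query`, and `class_weights`, the toy tensor sizes, and the random values standing in for VGG16 features. The Adam update step is omitted; a real system would use a deep learning framework rather than plain Python.

```python
import math
import random

NUM_CLASSES = 23  # number of ISL hand poses reported in the abstract


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_pool(features, query):
    """Collapse per-location feature vectors into one vector (assumed attention form).

    Each spatial location is scored by its dot product with `query`; the
    softmax of those scores gives the weights used for the weighted sum.
    """
    scores = [sum(f * q for f, q in zip(vec, query)) for vec in features]
    weights = softmax(scores)
    channels = len(features[0])
    pooled = [
        sum(w * vec[c] for w, vec in zip(weights, features))
        for c in range(channels)
    ]
    return pooled, weights


def classify(pooled, class_weights):
    """Linear layer followed by softmax: one probability per class."""
    logits = [sum(w * x for w, x in zip(row, pooled)) for row in class_weights]
    return softmax(logits)


def cross_entropy(probs, target):
    """Negative log-likelihood of the true class index."""
    return -math.log(probs[target])


# Toy stand-ins for CNN features. For a 224x224 input, VGG16's final pooling
# stage yields 512 channels on a 7x7 grid; 8 channels are used here for brevity.
rng = random.Random(0)
channels, locations = 8, 49
features = [[rng.random() for _ in range(channels)] for _ in range(locations)]
query = [rng.uniform(-1, 1) for _ in range(channels)]
class_weights = [
    [rng.uniform(-1, 1) for _ in range(channels)] for _ in range(NUM_CLASSES)
]

pooled, weights = attention_pool(features, query)
probs = classify(pooled, class_weights)
loss = cross_entropy(probs, target=3)  # hypothetical ground-truth class index
```

In this sketch the attention weights sum to one across the 49 spatial locations, so the pooled vector is a convex combination of the local features, and the loss shrinks as the probability assigned to the true class grows.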
