Reconciling privacy and accuracy in AI for medical imaging

IF 18.8

Zone 1, Computer Science

Q1 COMPUTER SCIENCE, ARTIFICIAL INTELLIGENCE

Citations: 0

Abstract

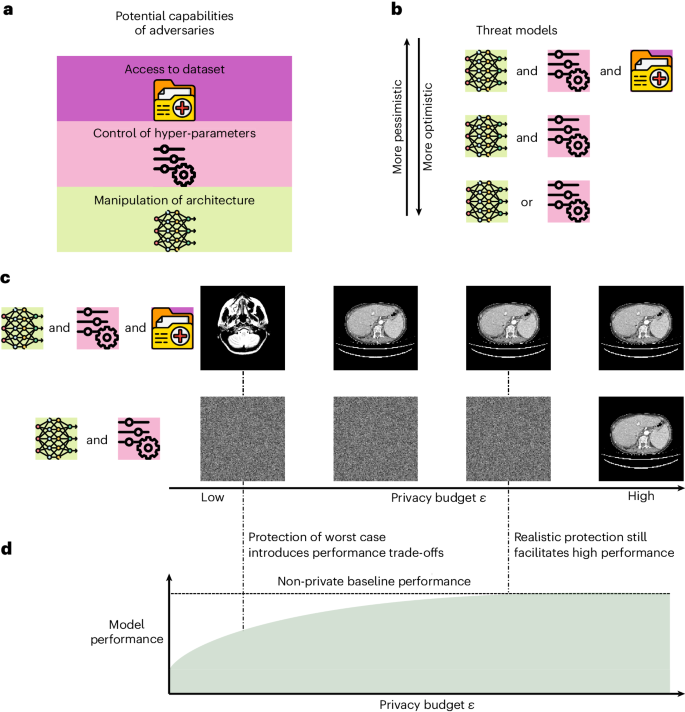

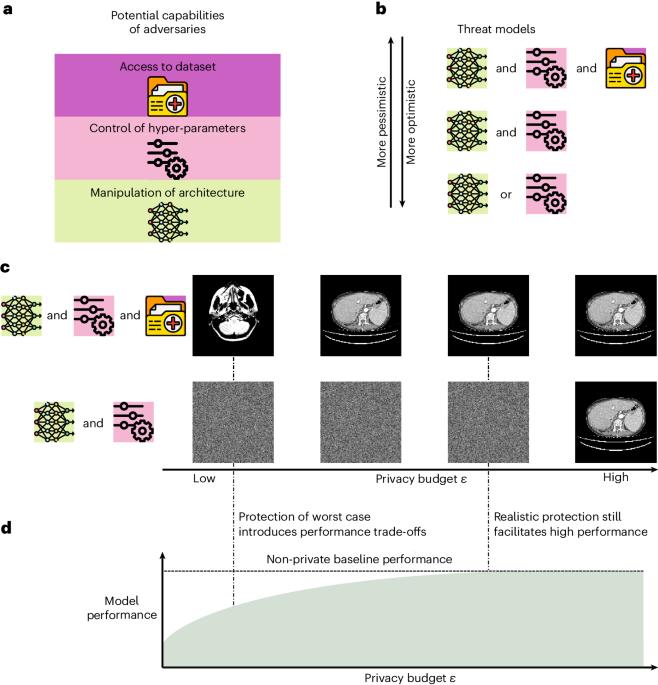

Artificial intelligence (AI) models are vulnerable to information leakage of their training data, which can be highly sensitive, for example, in medical imaging. Privacy-enhancing technologies, such as differential privacy (DP), aim to circumvent these susceptibilities. DP is the strongest possible protection for training models while bounding the risks of inferring the inclusion of training samples or reconstructing the original data. DP achieves this by setting a quantifiable privacy budget. Although a lower budget decreases the risk of information leakage, it typically also reduces the performance of such models. This imposes a trade-off between robust performance and stringent privacy. Additionally, the interpretation of a privacy budget remains abstract and challenging to contextualize. Here we contrast the performance of artificial intelligence models at various privacy budgets against both theoretical risk bounds and empirical success of reconstruction attacks. We show that using very large privacy budgets can render reconstruction attacks impossible, while drops in performance are negligible. We thus conclude that not using DP at all is negligent when applying artificial intelligence models to sensitive data. We deem our results to lay a foundation for further debates on striking a balance between privacy risks and model performance.

Ziller and colleagues present a balanced investigation of the trade-off between privacy and performance when training artificially intelligent models for medical imaging analysis tasks. The authors evaluate the use of differential privacy in realistic threat scenarios, leading to their conclusion to promote the use of differential privacy, but implementing it in a manner that also retains performance.
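To make the notion of a privacy budget slightly more concrete, the sketch below evaluates one standard textbook consequence of pure ε-DP (δ = 0): an adversary's posterior odds that a given sample was part of the training set can exceed the prior odds by at most a factor of e^ε. This is only an illustration and is not the analysis used in the paper, which compares theoretical risk bounds with empirical reconstruction attacks. The function name `posterior_bound` and the ε values in the loop are assumptions chosen for the example.

```python
import math


def posterior_bound(epsilon: float, prior: float = 0.5) -> float:
    """Upper bound on an adversary's posterior belief that a sample was in
    the training set, assuming pure epsilon-DP (delta = 0).

    Uses: posterior odds <= exp(epsilon) * prior odds.
    """
    if not 0.0 < prior < 1.0:
        raise ValueError("prior must lie strictly between 0 and 1")
    prior_odds = prior / (1.0 - prior)
    # Equivalent to odds / (1 + odds) with odds = exp(epsilon) * prior_odds,
    # written this way so that a large epsilon does not overflow.
    return 1.0 / (1.0 + math.exp(-epsilon) / prior_odds)


if __name__ == "__main__":
    # Illustrative budgets only; they are not taken from the paper.
    for eps in (0.1, 1.0, 8.0, 32.0):
        print(f"epsilon = {eps:>4}: posterior belief <= {posterior_bound(eps):.6f}")
```

With a small budget the bound stays close to the prior, while for large budgets it approaches 1 and carries little information. That is one reason the abstract also looks at empirical attack success rather than relying on worst-case bounds alone.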



Source journal

Nature Machine Intelligence

Multiple-

CiteScore

36.90

Self-citation rate

2.10%

Articles published

127

About the journal:

Nature Machine Intelligence is a distinguished publication that presents original research and reviews on various topics in machine learning, robotics, and AI. Our focus extends beyond these fields, exploring their profound impact on other scientific disciplines, as well as societal and industrial aspects. We recognize limitless possibilities wherein machine intelligence can augment human capabilities and knowledge in domains like scientific exploration, healthcare, medical diagnostics, and the creation of safe and sustainable cities, transportation, and agriculture. Simultaneously, we acknowledge the emergence of ethical, social, and legal concerns due to the rapid pace of advancements.

To foster interdisciplinary discussions on these far-reaching implications, Nature Machine Intelligence serves as a platform for dialogue facilitated through Comments, News Features, News & Views articles, and Correspondence. Our goal is to encourage a comprehensive examination of these subjects.

Similar to all Nature-branded journals, Nature Machine Intelligence operates under the guidance of a team of skilled editors. We adhere to a fair and rigorous peer-review process, ensuring high standards of copy-editing and production, swift publication, and editorial independence.
