Knowledge driven indoor object-goal navigation aid for visually impaired people

IF 1.3

Q4 COMPUTER SCIENCE, ARTIFICIAL INTELLIGENCE

Citations: 1

Abstract

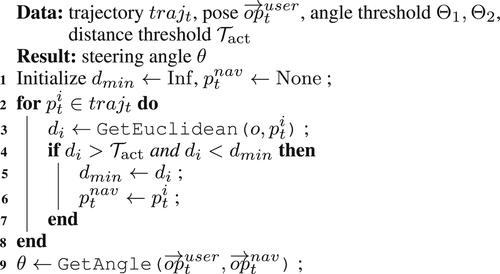

To help improve the quality of life of visually impaired people, this paper presents a novel wearable aid, in the shape of a helmet, that helps them find objects in indoor scenes. An object-goal navigation system based on this wearable device is developed, consisting of four modules: object relation prior knowledge (ORPK), perception, decision and feedback. So that the aid also works well in unfamiliar environments, ORPK is used for sub-goal inference to guide the user towards the target object. A method that learns the ORPK from unlabelled images by utilising a scene graph and a knowledge graph is also proposed. The effectiveness of the aid is demonstrated in real-world experiments.
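The abstract does not spell out how ORPK drives sub-goal inference, so the following is only a minimal sketch of the general idea, under stated assumptions. It swaps in plain co-occurrence counts over per-image object lists for the paper's scene-graph and knowledge-graph learning, and the names `learn_prior` and `infer_subgoal`, as well as the toy scenes, are hypothetical illustrations rather than the authors' implementation.

```python
from collections import Counter
from itertools import permutations


def learn_prior(scenes):
    """Estimate P(b present | a present) from per-image object lists.

    Assumption: simple co-occurrence counting stands in for the
    scene-graph / knowledge-graph learning described in the abstract.
    """
    single = Counter()
    pair = Counter()
    for objects in scenes:
        objs = set(objects)  # ignore duplicate detections within one image
        single.update(objs)
        pair.update(permutations(objs, 2))  # ordered pairs (a, b), a != b
    return {(a, b): n / single[a] for (a, b), n in pair.items()}


def infer_subgoal(target, visible, prior):
    """Return the target if visible, else the visible object most
    associated with it, else None (no useful cue: keep exploring)."""
    if target in visible:
        return target
    best = max(visible, key=lambda o: prior.get((o, target), 0.0), default=None)
    if best is None or prior.get((best, target), 0.0) == 0.0:
        return None
    return best


# Hypothetical toy data: object labels detected in five unlabelled images.
scenes = [
    ["desk", "mug", "monitor"],
    ["desk", "monitor", "keyboard"],
    ["sofa", "tv"],
    ["sink", "mug"],
    ["sofa", "cushion"],
]
prior = learn_prior(scenes)
subgoal = infer_subgoal("mug", ["sofa", "desk"], prior)
```

In this toy setting the user is looking for a mug while only a sofa and a desk are in view; the desk is chosen as the sub-goal because it co-occurred with a mug in the example scenes, whereas the sofa never did.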



Source journal

Cognitive Computation and Systems

Computer Science-Computer Science Applications

CiteScore

2.50

Self-citation rate

0.00%

Articles published

39

Review time

10 weeks
