Fully neuromorphic vision and control for autonomous drone flight

IF 26.1

Zone 1: Computer Science

Q1 ROBOTICS

Citations: 0

Abstract


Fully neuromorphic vision and control for autonomous drone flight

Biological sensing and processing is asynchronous and sparse, leading to low-latency and energy-efficient perception and action. In robotics, neuromorphic hardware for event-based vision and spiking neural networks promises to exhibit similar characteristics. However, robotic implementations have been limited to basic tasks with low-dimensional sensory inputs and motor actions because of the restricted network size in current embedded neuromorphic processors and the difficulties of training spiking neural networks. Here, we present a fully neuromorphic vision-to-control pipeline for controlling a flying drone. Specifically, we trained a spiking neural network that accepts raw event-based camera data and outputs low-level control actions for performing autonomous vision-based flight. The vision part of the network, consisting of five layers and 28,800 neurons, maps incoming raw events to ego-motion estimates and was trained with self-supervised learning on real event data. The control part consists of a single decoding layer and was learned with an evolutionary algorithm in a drone simulator. Robotic experiments show a successful sim-to-real transfer of the fully learned neuromorphic pipeline. The drone could accurately control its ego-motion, allowing for hovering, landing, and maneuvering sideways—even while yawing at the same time. The neuromorphic pipeline runs on board on Intel’s Loihi neuromorphic processor with an execution frequency of 200 hertz, consuming 0.94 watt of idle power and a mere additional 7 to 12 milliwatts when running the network. These results illustrate the potential of neuromorphic sensing and processing for enabling insect-sized intelligent robots.
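The power figures quoted in the abstract can be turned into a rough energy budget per network update. The sketch below is only back-of-the-envelope arithmetic: it assumes that each execution at 200 hertz corresponds to one network update and that the additional 7 to 12 milliwatts is spread evenly over those updates. The function and variable names are illustrative and do not come from the paper.

```python
# Figures quoted in the abstract.
EXECUTION_RATE_HZ = 200.0        # execution frequency on Loihi
IDLE_POWER_W = 0.94              # idle power of the processor
EXTRA_POWER_W = (0.007, 0.012)   # additional 7 to 12 mW when running the network


def energy_per_update_uj(extra_power_w: float, rate_hz: float = EXECUTION_RATE_HZ) -> float:
    """Additional energy per network update in microjoules.

    Assumption (not stated in the abstract): the extra power is spread
    evenly over one update per execution cycle.
    """
    return extra_power_w / rate_hz * 1e6


low_uj, high_uj = (energy_per_update_uj(p) for p in EXTRA_POWER_W)
total_low_w, total_high_w = (IDLE_POWER_W + p for p in EXTRA_POWER_W)

print(f"Additional energy per update: {low_uj:.0f} to {high_uj:.0f} microjoules")
print(f"Total power while running: {total_low_w:.3f} to {total_high_w:.3f} W")
```

Under these assumptions the network itself costs roughly 35 to 60 microjoules per update, while idle power dominates the total draw of about 0.95 watt.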

求助全文

通过发布文献求助,成功后即可免费获取论文全文。

去求助

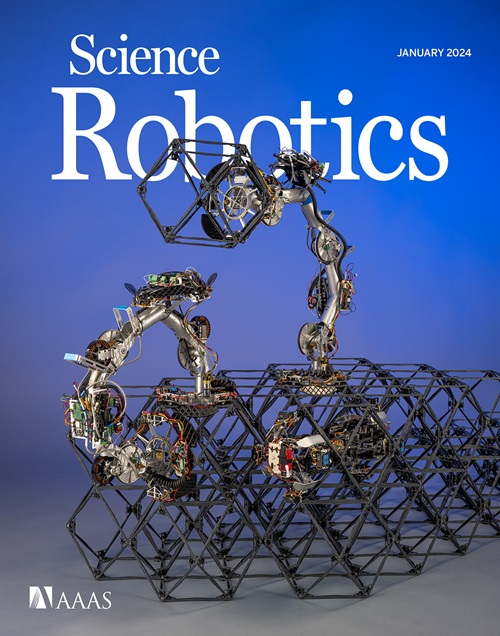

Source journal

Science Robotics

Mathematics-Control and Optimization

CiteScore

30.60

Self-citation rate

2.80%

Articles published

83

About the journal:

Science Robotics publishes original, peer-reviewed, science- or engineering-based research articles that advance the field of robotics. The journal also features editor-commissioned Reviews. An international team of academic editors holds Science Robotics articles to the same high-quality standard that is the hallmark of the Science family of journals.

Sub-topics include: actuators, advanced materials, artificial intelligence, autonomous vehicles, bio-inspired design, exoskeletons, fabrication, field robotics, human-robot interaction, humanoids, industrial robotics, kinematics, machine learning, material science, medical technology, motion planning and control, micro- and nano-robotics, multi-robot control, sensors, service robotics, social and ethical issues, soft robotics, and space, planetary and undersea exploration.
